
WebAssembly vs Docker: The Replacement That Never Happened (And Why That's the Point)

Everyone said WebAssembly would kill Docker. Two years later, they coexist — and the teams using both are shipping faster. A reality check on Wasm, containers, and the containerd shim approach.

Anurag Verma



The future of containers was supposed to be containerless. Reality had other plans.


The demo was perfect. November 2024. A DevOps conference. The speaker spun up a WebAssembly module on stage — cold start under a millisecond, binary size 1.2 MB, running a REST API that returned JSON. The crowd loved it. Someone in the front row tweeted “Docker is dead.” That tweet got 14,000 likes.

Then we tried to do it for real.

Our team spent six weeks attempting to migrate a client’s microservices architecture — eleven services, PostgreSQL, Redis, a message queue, a few background workers — from Docker Compose to a Wasm-native stack. By week three, we’d successfully ported exactly two of the eleven services. The rest hit walls: filesystem access patterns that WASI didn’t support cleanly, native library dependencies that had no Wasm compilation target, a Python service that we’d need to rewrite entirely, and an authentication service that relied on OpenSSL bindings that produced a 47 MB Wasm binary (so much for “lightweight”).

We abandoned the full migration. Instead, we ended up running those two ported services as Wasm modules alongside the other nine Docker containers, all orchestrated by the same Kubernetes cluster using containerd shims. It worked. It worked well. And it taught us the lesson that the conference speakers weren’t telling anyone: WebAssembly doesn’t replace Docker. It never will. And the teams that stop trying to make it a replacement and start treating it as a complement are the ones getting actual value from both.

The Prophecy That Wasn’t

Let’s be fair to the hype. It came from credible sources.

Solomon Hykes, the co-founder of Docker himself, tweeted in 2019: “If WASM+WASI existed in 2008, we wouldn’t have needed to create Docker.” That quote has been repeated in approximately every WebAssembly talk since. It’s technically interesting. It’s also been wildly misinterpreted.

Hykes was making a point about the elegance of the abstraction — a universal binary format with a capability-based security model is a beautiful idea. He was not saying “everyone should stop using Docker.” But the tech media ecosystem doesn’t do nuance. The narrative became: WebAssembly will replace containers. Full stop.

By 2024-2025, the Wasm ecosystem had matured significantly. WASI Preview 2 (now called WASI 0.2) landed with the Component Model, giving Wasm modules a standardized way to do I/O, networking, and filesystem operations. Fermyon’s Spin, Cosmonic’s wasmCloud, and other frameworks made it genuinely easy to build and deploy Wasm-based services. Fastly, Cloudflare, and Shopify were running Wasm in production at massive scale — at the edge.

The technology was real. The mistake was in the framing. “WebAssembly vs Docker” was never the right question. It’s like asking “screwdrivers vs hammers.” They’re both tools. They solve different problems. Sometimes the same project needs both.

Where WebAssembly Actually Wins

Let’s get specific. After two years of deploying both technologies in production across multiple client projects, here is where Wasm delivers value that Docker genuinely cannot match.

Edge Computing

This is Wasm’s home court. When you need to run code at CDN edge nodes (Cloudflare Workers, Fastly Compute, Vercel Edge Functions), Wasm and the lightweight isolates that host it are the only serious option. Docker containers take hundreds of milliseconds to seconds to cold-start. Wasm modules start in microseconds to a few milliseconds. At the edge, where you might be spinning up an instance per-request across hundreds of global locations, that difference isn’t incremental. It’s the difference between viable and not viable.

We built an image transformation pipeline for an e-commerce client that runs entirely as Wasm at the edge. Request comes in, Wasm module resizes and optimizes the image, response goes out. P99 latency: 23ms. The equivalent Docker-based approach they were running before had a P99 of 340ms because of cold starts and the overhead of spinning up a full container runtime. That’s not a marginal improvement. That’s a different product experience.

Plugin and Extension Systems

If you’re building a platform where users or third parties provide custom logic — think Shopify functions, game mod systems, data transformation pipelines — Wasm is the right answer. You get true sandboxing (the module can only access what you explicitly grant it), deterministic execution, and language agnosticism (the plugin author can write in Rust, Go, C, or any language that compiles to Wasm).

Docker can do isolation too, obviously. But spinning up a Docker container for every plugin invocation is absurdly heavyweight. The overhead per invocation is measured in hundreds of milliseconds and tens of megabytes. Wasm’s overhead is measured in microseconds and kilobytes. For a platform executing thousands of plugin calls per second, the math isn’t even close.

    // A Wasm plugin interface using the Component Model
    // Plugin authors implement this, platform hosts execute it
    wit_bindgen::generate!({
        world: "price-calculator",
        exports: {
            "pricing/engine": PriceEngine
        }
    });

    struct PriceEngine;

    impl exports::pricing::engine::Guest for PriceEngine {
        fn calculate(item: Item, context: PricingContext) -> Price {
            let base = item.base_price;
            let discount = match context.customer_tier {
                Tier::Premium => 0.15,
                Tier::Standard => 0.05,
                Tier::New => 0.10,
            };
            Price {
                amount: base * (1.0 - discount),
                currency: context.currency,
            }
        }
    }

Sandboxed Execution in Existing Applications

Need to run untrusted code safely? Wasm’s security model (deny by default, explicit capability grants) is fundamentally stronger than Docker’s. Containers share the host kernel and can call into it directly, so a container escape is a real vulnerability class that security teams actively worry about. A Wasm module runs inside a sandboxed runtime: it cannot issue system calls on its own, doesn’t have access to the filesystem unless you grant it, and can’t make network calls unless you provide the capability.
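To make "deny by default, explicit capability grants" concrete, here is a minimal sketch in plain Python. It is a toy model of the host-side checks, not the API of wasmtime or any other runtime; the `Capabilities`, `Sandbox`, and `CapabilityError` names are hypothetical and exist only for illustration.

```python
import posixpath
from dataclasses import dataclass


class CapabilityError(PermissionError):
    """Raised when the guest asks for something the host never granted."""


@dataclass(frozen=True)
class Capabilities:
    # Deny by default: empty grant sets mean no filesystem and no network.
    readable_dirs: frozenset = frozenset()
    allowed_hosts: frozenset = frozenset()


class Sandbox:
    """Toy stand-in for the permission checks a Wasm host performs."""

    def __init__(self, caps: Capabilities = Capabilities()):
        self._caps = caps

    def open_for_read(self, path: str) -> str:
        # Normalize first so "/data/../etc" cannot sneak past the prefix check.
        normalized = posixpath.normpath(path)
        for granted in self._caps.readable_dirs:
            root = granted.rstrip("/")
            if normalized == root or normalized.startswith(root + "/"):
                return normalized
        raise CapabilityError(f"no read capability for {path}")

    def connect(self, host: str) -> str:
        # Network access is allowed only to hosts that were explicitly granted.
        if host not in self._caps.allowed_hosts:
            raise CapabilityError(f"no network capability for {host}")
        return host
```

A `Sandbox()` built with no arguments can do nothing at all, which is the point: every permission the guest holds is one the host chose to hand over.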

We embedded wasmtime into a Node.js application for a client that needed to execute user-submitted data transformation scripts. Previously they were using vm2 (which had known sandbox escapes) inside Docker containers (defense in depth). With Wasm, the sandboxing is built into the execution model. We dropped Docker entirely for that specific workload and reduced infrastructure costs by 60%.

Where Docker Still Wins (And Will Keep Winning)

Here’s the part that Wasm advocates tend to gloss over. Docker and OCI containers have advantages that aren’t going away, because they’re rooted in fundamentally different design choices.

Full Operating System Access

Docker containers run a real Linux userspace. Your application can assume the existence of a filesystem, environment variables, /dev/urandom, shared libraries, Unix sockets, system calls — all of it. WASI is getting better, but it’s still an abstraction over a subset of OS functionality. Real-world applications, especially legacy ones, depend on OS-level features that WASI doesn’t cover.

Try running Puppeteer in Wasm. Or FFmpeg with hardware acceleration. Or anything that links against glibc and expects a Linux ABI. These aren’t edge cases — they’re Tuesday for most backend teams.

The Ecosystem

This is Docker’s unassailable moat, at least for the foreseeable future. Docker Hub has over 15 million container images. Every major database, cache, queue, monitoring tool, and middleware has an official Docker image. docker compose up and you have Postgres, Redis, Elasticsearch, and Kafka running locally in under a minute.

The Wasm ecosystem has… a few hundred packages on wa.dev, the component model registry. Growing, yes. But growing from “a few hundred” to “fifteen million” is not a gap that closes in a year or two. And many of those Docker images wrap software written in C, C++, or Java with complex native dependencies that can’t trivially compile to Wasm.

Existing Workflows and Team Knowledge

Every DevOps engineer knows Docker. Every CI/CD pipeline speaks Docker. Kubernetes, the most widely deployed orchestration platform, is built around the container abstraction. Dockerfiles are imperfect, but they’re understood. Teams have years of muscle memory around docker build, docker push, multi-stage builds, layer caching, and debugging containers.

Asking a team to rewrite their deployment pipeline for Wasm is a real cost. Not just the engineering hours — the risk, the learning curve, the tooling gaps, the debugging stories that haven’t been written yet. For most workloads that are happily running in Docker today, the ROI of switching to Wasm is negative.

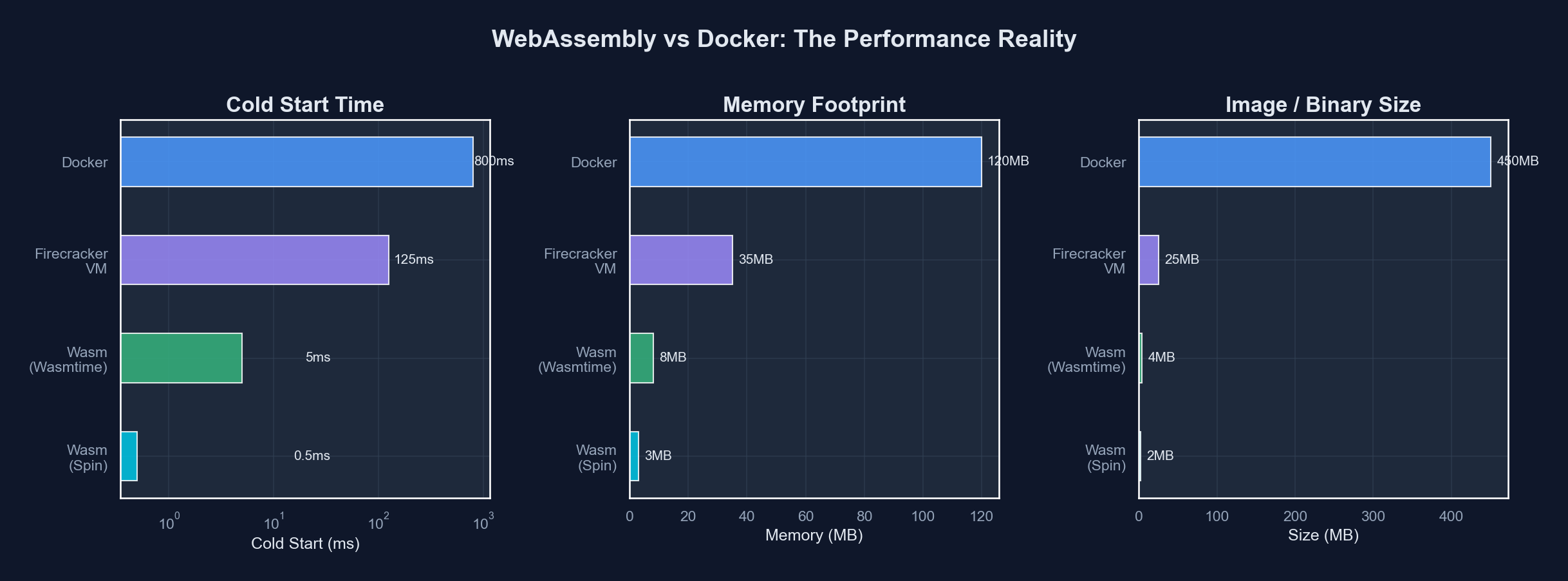

The Head-to-Head Comparison

Here’s where things stand in early 2026, measured honestly rather than aspirationally.

| Dimension | Docker / OCI Containers | WebAssembly (WASI) |
|---|---|---|
| Cold start time | 500ms - 5s | < 1ms - 10ms |
| Binary / image size | 50MB - 2GB+ | 500KB - 20MB |
| Security isolation | Process-level (shared kernel) | Runtime sandbox (no direct syscalls) |
| Language support | Any (runs Linux binaries) | Rust, C/C++, Go, JS, Python (with caveats) |
| OS feature access | Full Linux userspace | WASI subset (growing) |
| Ecosystem maturity | 15M+ images, 10+ years | Hundreds of components, 2-3 years |
| Debugging tooling | Mature (exec, logs, strace) | Improving but gaps remain |
| GPU / hardware access | Full (device passthrough) | Experimental at best |
| Production track record | Decade+ across every industry | Edge computing proven, general backend emerging |
| Team familiarity | Universal in DevOps | Niche, growing |

And for workload-specific guidance:

| Workload Type | Best Fit | Why |
|---|---|---|
| Edge functions / CDN logic | Wasm | Microsecond cold starts, tiny binaries |
| Plugin / extension systems | Wasm | Sandboxing, per-invocation efficiency |
| Untrusted code execution | Wasm | Capability-based security model |
| Full-stack web applications | Docker | OS access, library ecosystem, existing tooling |
| Database / stateful services | Docker | Filesystem, networking, existing images |
| ML / GPU workloads | Docker | Hardware access, CUDA, mature tooling |
| Local development environments | Docker | Compose, ecosystem, team familiarity |
| CI/CD pipeline steps | Both | Docker for complex builds, Wasm for fast validation tasks |
| Serverless functions | Both | Wasm for latency-sensitive, Docker for dependency-heavy |

The Containerd Shim: How They Actually Coexist

The most important technical development in this space isn’t a Wasm feature or a Docker feature. It’s the containerd-wasm-shim — the bridge that lets Kubernetes run Wasm workloads alongside traditional containers in the same cluster, managed by the same orchestration layer.

Here’s how it works. Containerd, the container runtime that underlies Docker and Kubernetes, supports pluggable “shims” — small processes that manage the lifecycle of a workload. The default shim (containerd-shim-runc-v2) uses runc to run OCI containers. The Wasm shims (containerd-shim-wasmtime-v1, containerd-shim-spin-v2, etc.) use a Wasm runtime instead.

From Kubernetes’ perspective, both are just pods. Same scheduling, same networking, same service discovery, same monitoring. The difference is entirely in the runtime layer.
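The lookup chain is easier to see written out. The sketch below models it in Python: a pod's `runtimeClassName` selects a handler, the handler maps to a containerd runtime type, and the runtime type determines the shim binary name (containerd derives it from the last two dot-separated parts of the type). The dictionaries are hypothetical cluster state that mirrors the names used in this article; a real cluster keeps this information in RuntimeClass objects and the containerd configuration.

```python
from typing import Optional

# Hypothetical cluster state for illustration only.
RUNTIME_CLASSES = {"wasmtime": "wasmtime"}  # RuntimeClass name -> handler
CONTAINERD_RUNTIMES = {                     # handler -> containerd runtime type
    "runc": "io.containerd.runc.v2",
    "wasmtime": "io.containerd.wasmtime.v1",
}
DEFAULT_HANDLER = "runc"


def shim_binary_for(runtime_type: str) -> str:
    """Derive the shim binary name from a containerd runtime type."""
    parts = runtime_type.split(".")
    if len(parts) < 2 or not all(parts[-2:]):
        raise ValueError(f"invalid runtime type: {runtime_type!r}")
    name, version = parts[-2], parts[-1]
    return f"containerd-shim-{name}-{version}"


def resolve_shim(runtime_class_name: Optional[str]) -> str:
    """Follow pod -> RuntimeClass -> handler -> runtime type -> shim binary."""
    if runtime_class_name is None:
        handler = DEFAULT_HANDLER  # pods without a RuntimeClass use the default
    else:
        handler = RUNTIME_CLASSES[runtime_class_name]
    return shim_binary_for(CONTAINERD_RUNTIMES[handler])


print(resolve_shim(None))        # ordinary container pod
print(resolve_shim("wasmtime"))  # Wasm pod
```

Everything above the shim (scheduling, networking, service discovery) takes the same path for both kinds of pod, which is why the rest of the cluster does not need to know the difference.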

    # Kubernetes Deployment running a Wasm workload via containerd shim
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: edge-processor
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: edge-processor
      template:
        metadata:
          labels:
            app: edge-processor
        spec:
          runtimeClassName: wasmtime # This is the key line
          containers:
            - name: processor
              image: ghcr.io/myorg/edge-processor:latest
              # This "image" is actually a Wasm module packaged as an OCI artifact
              resources:
                limits:
                  memory: "64Mi"
                  cpu: "100m"
    ---
    # RuntimeClass definition that tells Kubernetes to use the Wasm shim
    apiVersion: node.k8s.io/v1
    kind: RuntimeClass
    metadata:
      name: wasmtime
    handler: wasmtime
    scheduling:
      nodeSelector:
        # Hypothetical custom label: apply it to the nodes with the Wasm shim installed.
        # (kubernetes.io/arch is set by the kubelet to values such as amd64 or arm64,
        # so selecting on a Wasm-specific arch value would match no shim-based nodes.)
        example.com/wasm-shim: "true"

This is the pattern we’ve standardized on for clients who want to use Wasm in production. You don’t rip out Docker. You don’t rewrite your deployment pipeline. You add a RuntimeClass, install the shim on your nodes (most managed Kubernetes providers support this now: AKS has had it since 2024, and EKS and GKE added support in 2025), and deploy Wasm workloads alongside your existing containers.

The containerd shim approach: Wasm and Docker containers running side by side, orchestrated by the same Kubernetes control plane.


Real Production Use Cases (Not Conference Demos)

Let me share three actual deployments we’ve done where the Docker-plus-Wasm coexistence model is running in production right now.

1. E-Commerce Personalization at the Edge

A retail client with global traffic needed request-level personalization — pricing rules, A/B test routing, content localization — executed as close to the user as possible. The core application (product catalog, checkout, inventory) runs as Docker containers in a central Kubernetes cluster. The personalization logic runs as Wasm modules deployed to Cloudflare Workers across 300+ edge locations.

The Wasm modules are compiled from Rust, each under 2 MB. They read configuration from a KV store, apply business rules to the incoming request, and either modify headers (for the A/B routing) or rewrite response bodies (for pricing and content). Cold start: sub-millisecond. The central services still run in Docker because they need PostgreSQL connections, Redis caching, and all the dependencies that a full backend requires.
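The A/B routing step is the simplest of the three to illustrate. The production modules are written in Rust; the sketch below uses Python only to show the logic, and the header names (`x-user-id`, `x-ab-variant`) and config keys are hypothetical. The idea is deterministic bucketing: hash the user and experiment together so the same user always lands in the same variant, with no shared state between edge locations.

```python
import hashlib


def ab_bucket(user_id: str, experiment: str, treatment_share: float) -> str:
    """Deterministically assign a user to 'treatment' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto a position in [0, 1).
    position = int.from_bytes(digest[:8], "big") / 2**64
    return "treatment" if position < treatment_share else "control"


def route_request(headers: dict, config: dict) -> dict:
    """Return a copy of the request headers with the chosen variant attached."""
    routed = dict(headers)  # leave the incoming headers untouched
    user_id = headers.get("x-user-id", "anonymous")
    routed["x-ab-variant"] = ab_bucket(
        user_id, config["experiment"], config["treatment_share"]
    )
    return routed


# In this sketch the config dict stands in for the value read from the KV store.
config = {"experiment": "pricing-banner", "treatment_share": 0.2}
print(route_request({"x-user-id": "u-1842"}, config))
```

Because the assignment depends only on the inputs, every edge location reaches the same answer without coordinating, which suits a module that may be instantiated fresh for each request.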

2. Data Pipeline with User-Defined Transformations

A data analytics platform needed to let customers write custom transformation functions that execute within the pipeline. Security was paramount — customer code runs on shared infrastructure, and a sandbox escape would be catastrophic.

The pipeline infrastructure (Kafka, Flink, storage) runs in Docker containers on Kubernetes. Customer transformation functions are compiled to Wasm components and executed via wasmtime embedded in the pipeline workers. Each function gets explicit capabilities: read access to the input stream, write access to the output stream, nothing else. No filesystem. No network. No ambient authority.

    # Customer-facing SDK (Python) for writing transformation functions
    # This compiles to a Wasm component via componentize-py
    from pipeline_sdk import transform, Record

    @transform
    def enrich_event(record: Record) -> Record:
        """Add computed fields to each event record."""
        record.fields["session_duration_minutes"] = (
            record.fields["session_end"] - record.fields["session_start"]
        ) / 60.0

        if record.fields["session_duration_minutes"] > 30:
            record.fields["engagement_tier"] = "high"
        else:
            record.fields["engagement_tier"] = "standard"

        return record

Before Wasm, they were running customer code in gVisor-sandboxed Docker containers. The cold start per invocation was 800ms. With Wasm, it’s 3ms. At 50,000 transformations per second, that difference eliminated the need for a massive pre-warming pool of containers.
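A quick back-of-the-envelope calculation shows why the pool disappears. By Little's law, the average number of instances sitting in cold start equals the arrival rate multiplied by the cold start time. The sketch assumes the worst case, where every invocation pays a cold start and nothing is reused; that is exactly the situation a pre-warmed pool exists to avoid.

```python
def instances_in_cold_start(invocations_per_second: int, cold_start_ms: int) -> float:
    """Little's law: average number in a stage = arrival rate x time in that stage."""
    return invocations_per_second * cold_start_ms / 1000


RATE = 50_000  # transformations per second, from the deployment described above

container_backlog = instances_in_cold_start(RATE, 800)  # gVisor-sandboxed containers
wasm_backlog = instances_in_cold_start(RATE, 3)         # Wasm components

print(f"containers starting at any moment: {container_backlog:,.0f}")
print(f"wasm instances starting at any moment: {wasm_backlog:,.0f}")
```

At 800ms, roughly 40,000 containers would be mid-start at any instant without pre-warming. At 3ms the figure drops to about 150, small enough to instantiate on demand.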

3. IoT Gateway with OTA-Updatable Logic

An industrial IoT client runs gateway devices in manufacturing facilities. The gateways collect sensor data, apply validation and alerting rules, and forward data to the cloud. The gateway OS and core services run in Docker containers (on embedded Linux). The validation and alerting logic runs as Wasm modules that can be updated over-the-air without restarting the gateway.

Why Wasm for the logic layer? Because pushing a new Docker image to 2,000 field-deployed gateways, pulling it over cellular connections, and restarting the container runtime is a 45-minute operation with real downtime risk. Pushing a 400KB Wasm module, hot-swapping it in the embedded runtime, takes 8 seconds with zero downtime.
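The hot-swap itself follows a familiar pattern: validate the candidate, then replace a single reference while the old logic keeps serving until the switch. The sketch below models that in Python with a plain callable standing in for the Wasm module instance; `RuleSlot`, the smoke-test reading, and the temperature rule are all hypothetical, and a real gateway would do this inside its embedded Wasm runtime.

```python
import threading
from typing import Callable, Dict

Reading = Dict[str, float]
Rule = Callable[[Reading], bool]  # True means the reading should raise an alert


class RuleSlot:
    """Holds the active rule and lets it be replaced without stopping evaluation."""

    def __init__(self, initial: Rule):
        self._lock = threading.Lock()
        self._rule = initial
        self.version = 1

    def evaluate(self, reading: Reading) -> bool:
        # Grab the current rule under the lock, then run it outside the lock
        # so a slow rule never blocks a swap.
        with self._lock:
            rule = self._rule
        return rule(reading)

    def swap(self, candidate: Rule, smoke_test: Reading) -> bool:
        # Reject a candidate that cannot process a known-good reading.
        try:
            candidate(smoke_test)
        except Exception:
            return False
        with self._lock:
            self._rule = candidate
            self.version += 1
        return True


slot = RuleSlot(lambda r: r["temp_c"] > 80)
slot.swap(lambda r: r["temp_c"] > 70, smoke_test={"temp_c": 20.0})
print(slot.version, slot.evaluate({"temp_c": 75.0}))
```

A failed smoke test leaves the previous rule in place, so a bad update costs a rejected push rather than a gateway outage.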

The Decision Framework

After going through this with multiple clients, we’ve landed on a simple set of questions to determine which runtime fits a given workload.

Use Wasm when:

- Cold start latency matters (sub-10ms requirement)

- You’re running at the edge (CDN, IoT, embedded)

- You need to execute untrusted or third-party code

- Binary size is constrained (bandwidth-limited deployment)

- You’re building a plugin or extension system

- Per-invocation overhead must be minimal (high-frequency execution)

Use Docker when:

- The application depends on native OS features or system calls

- You need GPU, hardware accelerator, or device access

- The dependency tree includes libraries without Wasm targets

- Your team’s tooling and expertise are container-native

- You’re running stateful services (databases, queues, caches)

- The existing solution works and doesn’t have a latency or size problem

Use both when:

- You have a mix of workloads with different performance profiles

- Edge and origin serve different roles in your architecture

- You want to incrementally adopt Wasm without a full migration

- Your platform needs sandboxed plugin execution alongside general backend services
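The three lists above can be read as a small decision function. The sketch below is one possible encoding, not a formal rule set: the field names are hypothetical, each flag corresponds to one bullet, and the two organizational bullets (team expertise, "the existing solution works") are folded into the default answer.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Signals that favor Wasm
    needs_sub_10ms_cold_start: bool = False
    runs_at_edge: bool = False
    runs_untrusted_code: bool = False
    size_constrained: bool = False
    is_plugin_system: bool = False
    high_frequency_invocation: bool = False
    # Signals that favor Docker
    needs_native_os_features: bool = False
    needs_gpu_or_devices: bool = False
    has_deps_without_wasm_target: bool = False
    is_stateful_service: bool = False


def recommend(w: Workload) -> str:
    """Return 'wasm', 'docker', or 'both' for a described workload."""
    wasm_signals = any([
        w.needs_sub_10ms_cold_start, w.runs_at_edge, w.runs_untrusted_code,
        w.size_constrained, w.is_plugin_system, w.high_frequency_invocation,
    ])
    docker_signals = any([
        w.needs_native_os_features, w.needs_gpu_or_devices,
        w.has_deps_without_wasm_target, w.is_stateful_service,
    ])
    if wasm_signals and docker_signals:
        return "both"  # split it: Wasm for the hot path, containers for the rest
    if wasm_signals:
        return "wasm"
    # No pressing latency, size, or sandboxing need: stay with what already works.
    return "docker"


print(recommend(Workload(is_plugin_system=True, is_stateful_service=True)))
```

The default branch matters most in practice: with no Wasm signal present, the function recommends staying on containers.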

What’s Actually Coming Next

I want to end with predictions, but grounded ones rather than hype-cycle ones.

WASI 0.3 and async support will land in 2026 and will meaningfully expand what Wasm can do on the server side. The ability to handle async I/O natively (rather than through awkward blocking shims) removes one of the biggest practical barriers to porting existing services. This is a big deal, but it won’t suddenly make Wasm a Docker replacement — it will make it a better complement.

The Component Model ecosystem will grow, but slowly. Building reusable Wasm components that work across runtimes and languages is genuinely hard engineering. The registry and tooling are improving, but “npm for Wasm” is still years away.

Managed Kubernetes providers will continue expanding Wasm shim support, making the coexistence pattern easier to adopt. By the end of 2026, running mixed Docker-and-Wasm workloads in the same cluster will be unremarkable.

Docker itself will continue integrating Wasm. Docker Desktop already supports running Wasm containers via containerd shims. The docker run --runtime=io.containerd.wasmtime.v1 workflow will get smoother. Docker isn’t fighting Wasm — they’re absorbing it.

The Unsexy Truth

The most productive framing for WebAssembly in 2026 is not “the future of containers” or “Docker killer” or any of the other narrative frames that generate conference talk submissions and Twitter engagement. It’s this: WebAssembly is a specialized runtime with specific strengths that complement container-based infrastructure.

That’s not a very exciting sentence. It won’t get 14,000 likes. But it’s the sentence that leads to good engineering decisions — the kind where you pick the right tool for the workload, deploy it using proven patterns, and ship features instead of fighting your infrastructure.

The teams that figured this out eighteen months ago are quietly running hybrid architectures that are faster, cheaper, and more secure than either pure-Docker or pure-Wasm approaches. They didn’t wait for one technology to “win.” They let each technology do what it’s good at.

That’s not a compromise. That’s engineering.
