Case study

ChatCops

An open-source, embeddable AI chat widget that connects to Claude, OpenAI, or Gemini. One script tag, zero dependencies, full control — with lead capture, knowledge base, i18n, and multi-platform deployment.

Overview

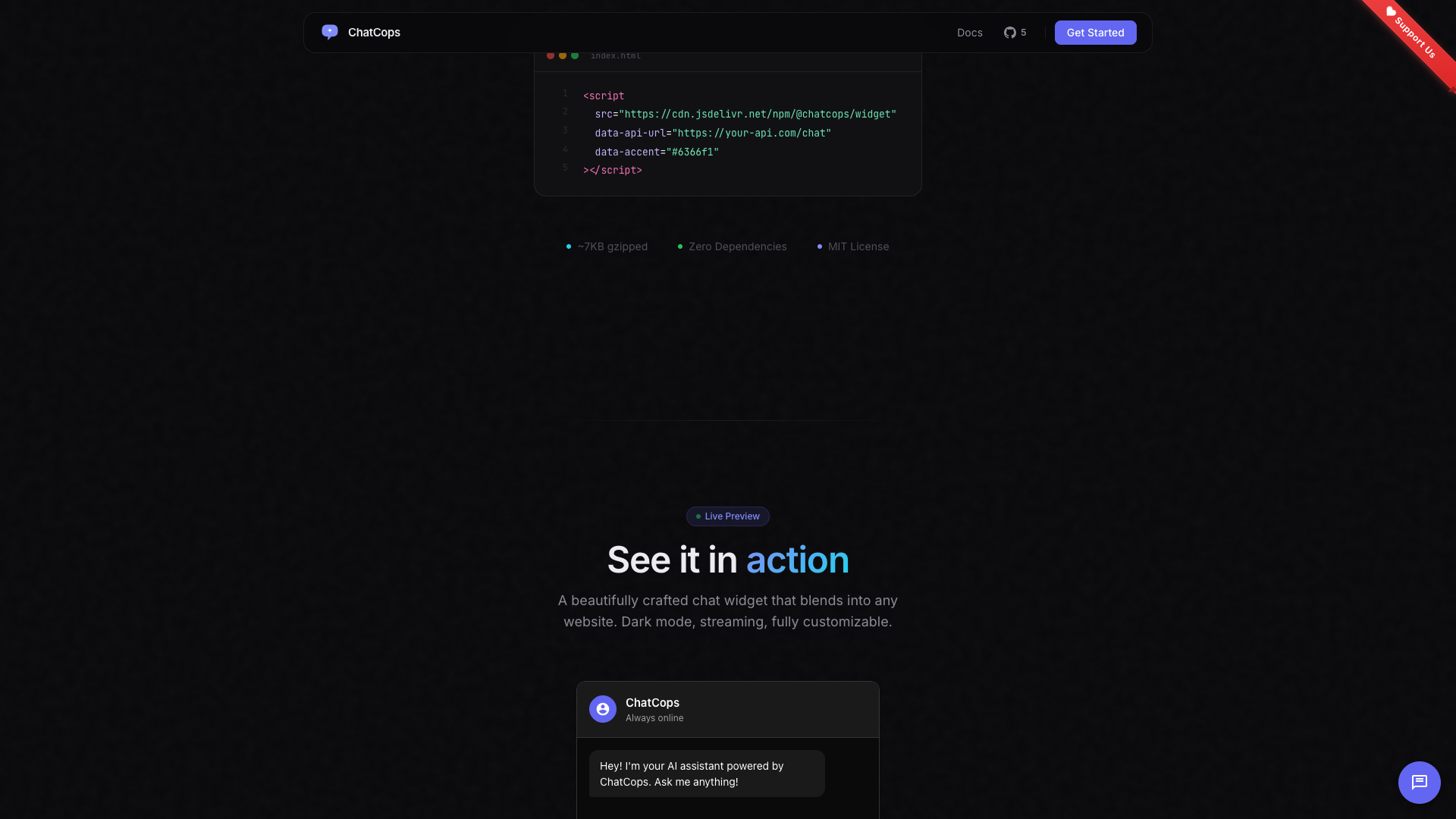

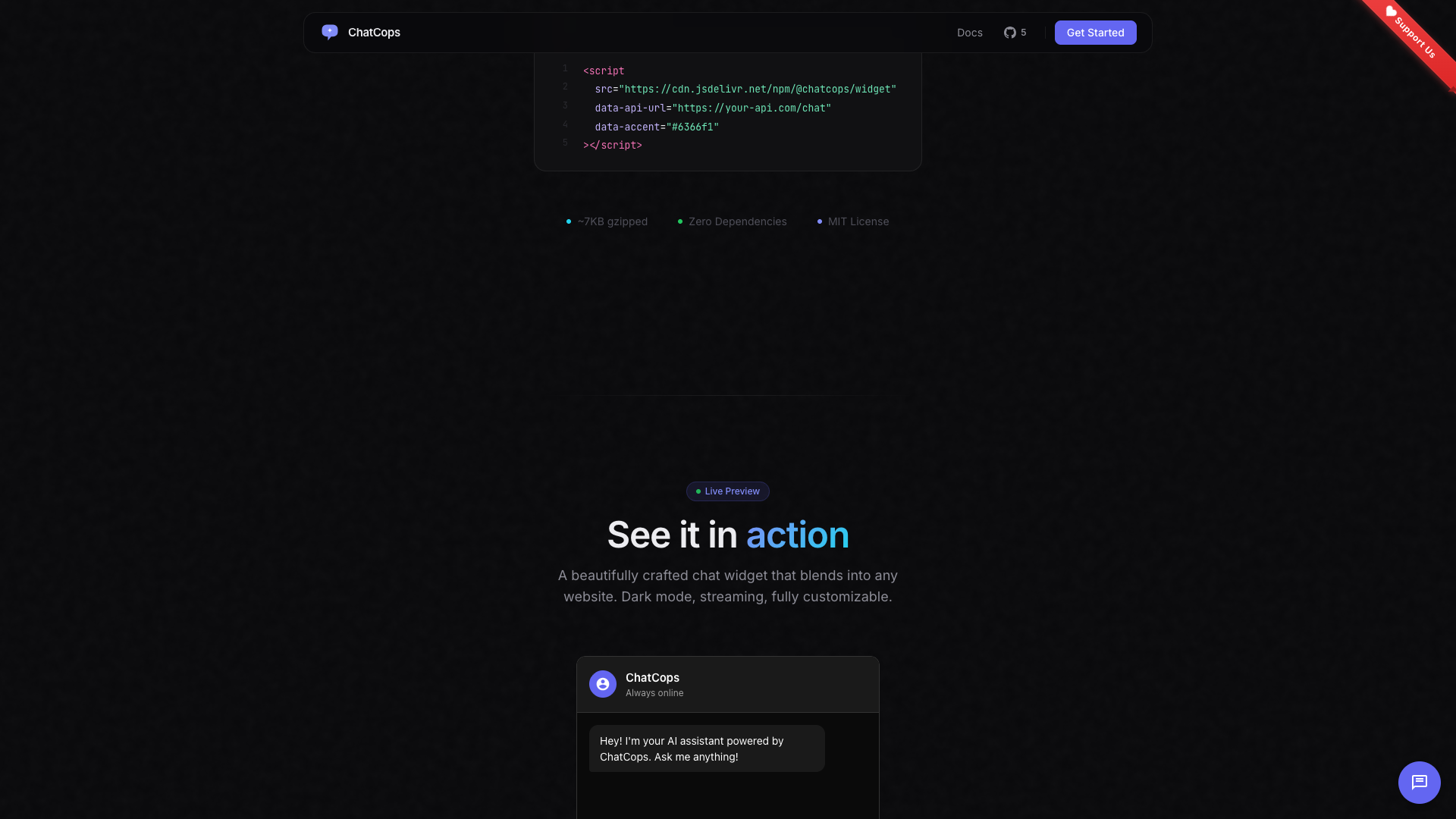

ChatCops is a free, open-source AI chat widget that drops into any website with a single script tag. It connects to Claude, OpenAI, or Gemini — letting site owners add an intelligent chatbot without building infrastructure from scratch. The widget ships at ~7KB gzipped with zero dependencies, uses Shadow DOM for complete style isolation, and streams responses token-by-token via Server-Sent Events for a natural conversational feel.

Beyond basic chat, ChatCops includes built-in lead capture with webhook support, knowledge base integration for context-aware responses, conversation persistence, internationalization across 8 languages, and server adapters for Express, Vercel, and Cloudflare Workers.

Challenge

Adding AI chat to a website typically means choosing between expensive SaaS products with vendor lock-in and building a full chat stack from scratch: frontend widget, backend proxy, provider integration, streaming, persistence, and deployment. Most solutions also tie you to a single AI provider, so switching between Claude, OpenAI, and Gemini means rewriting your backend. We needed a solution that was genuinely open, provider-agnostic, and deployable anywhere with minimal effort.

Solution

We built ChatCops as a two-part system: a lightweight client widget and a flexible server middleware — both designed for zero-friction setup.

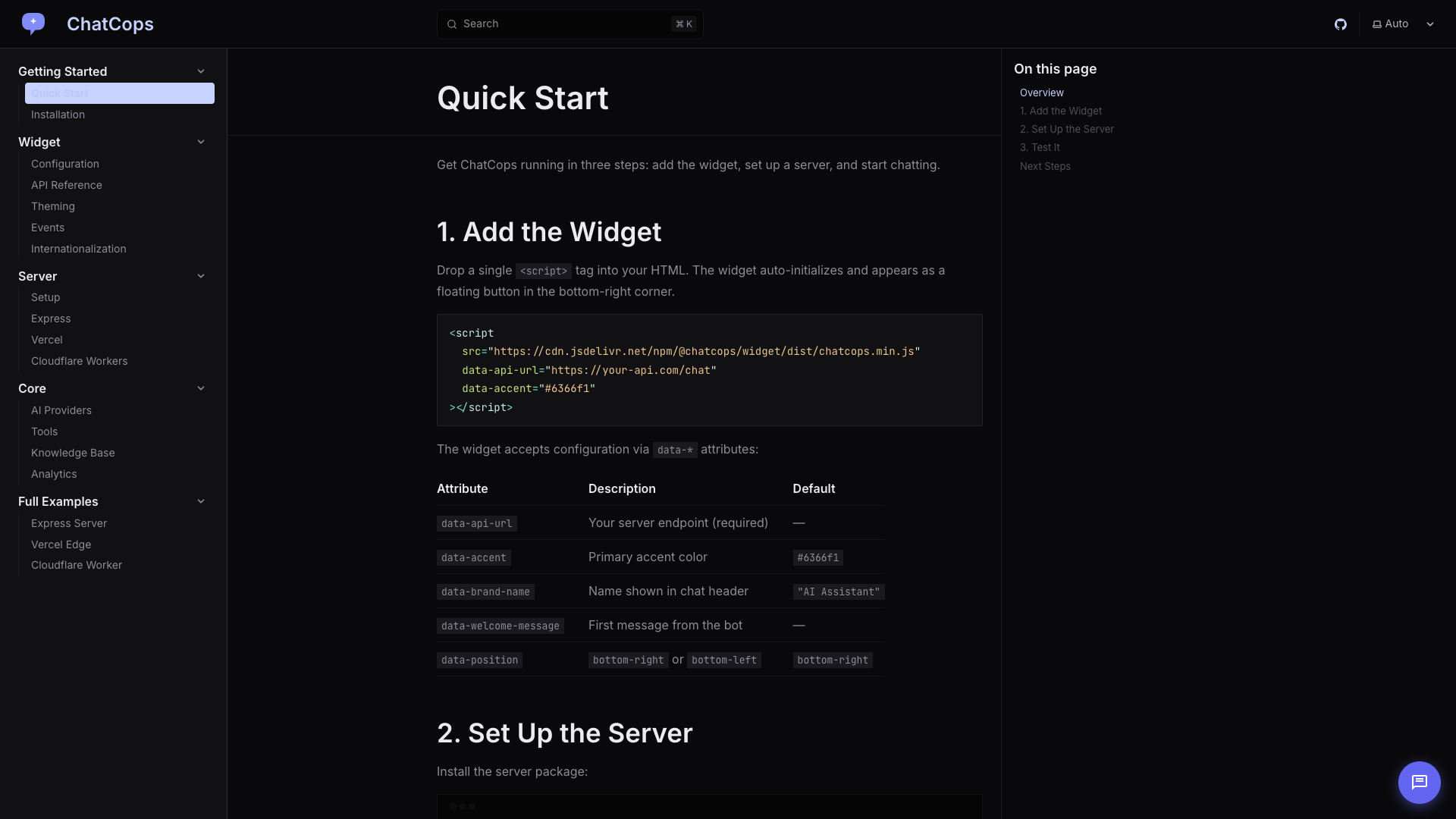

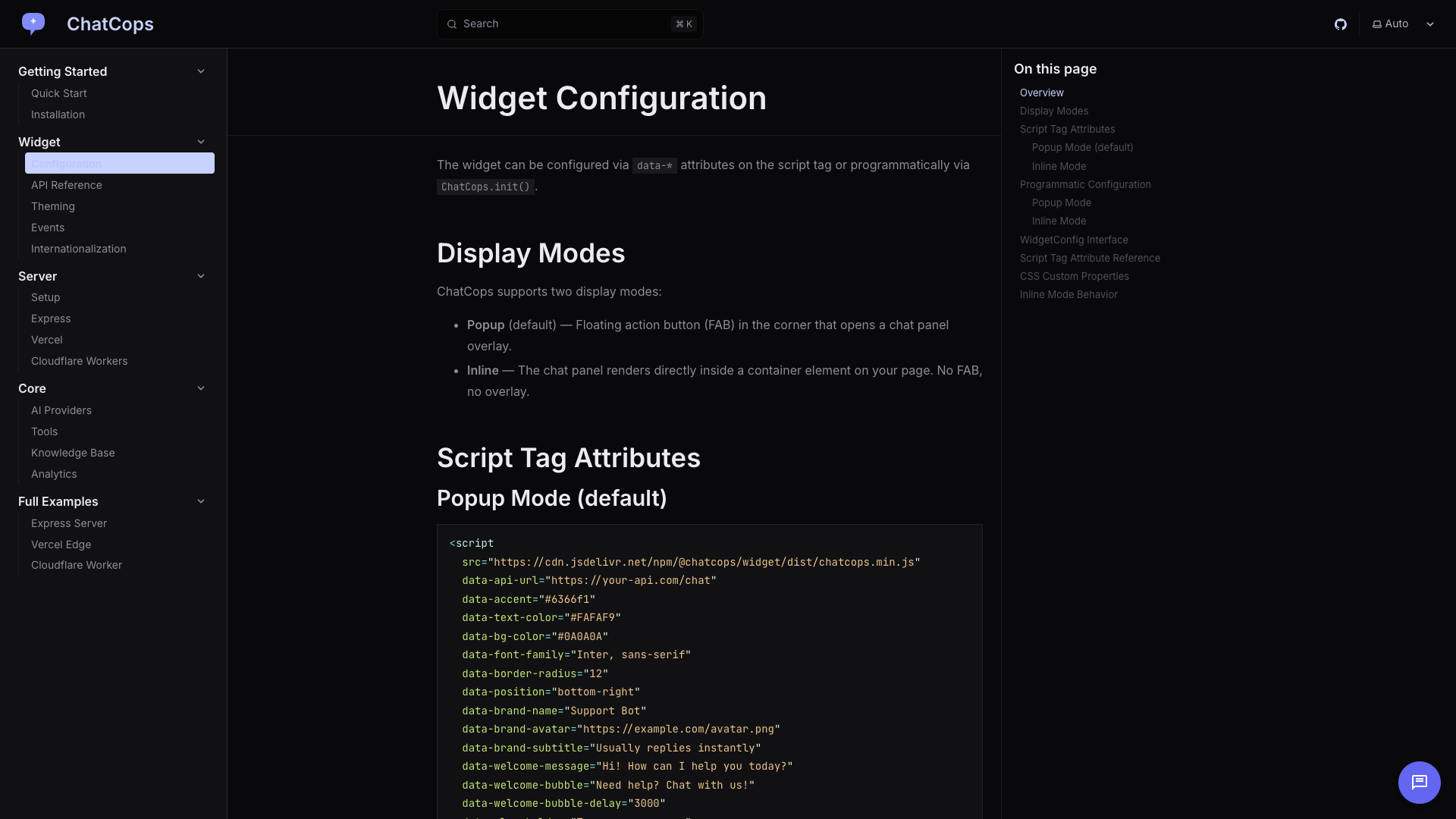

One Script Tag Setup

The widget initializes from a single <script> tag, with configuration supplied through HTML data-* attributes. No build step, no npm install, no framework dependency. It creates an isolated Shadow DOM container, so it never conflicts with existing site styles. Configuration covers everything from accent colors and branding to welcome messages and display modes (a popup opened from a floating action button, or an inline embed).
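To make the data-* approach concrete, here is a minimal sketch of how a widget might turn those attributes into a typed config with defaults. The attribute names (`data-api-url`, `data-accent`, `data-welcome-message`, `data-mode`), the default values, and the `readWidgetConfig` helper are all hypothetical and are not taken from the ChatCops source.

```typescript
// Hypothetical option names; ChatCops' real data-* attributes may differ.
interface WidgetConfig {
  apiUrl: string;
  accent: string;
  welcomeMessage: string;
  mode: "popup" | "inline";
}

// Assumed defaults, for illustration only.
const DEFAULTS: Omit<WidgetConfig, "apiUrl"> = {
  accent: "#6366f1",
  welcomeMessage: "Hi! How can I help?",
  mode: "popup",
};

// `dataset` has the same shape as HTMLScriptElement.dataset,
// where data-api-url is exposed as the key "apiUrl".
function readWidgetConfig(
  dataset: Record<string, string | undefined>
): WidgetConfig {
  const apiUrl = dataset["apiUrl"];
  if (!apiUrl) {
    throw new Error("data-api-url is required");
  }
  return {
    apiUrl,
    accent: dataset["accent"] ?? DEFAULTS.accent,
    welcomeMessage: dataset["welcomeMessage"] ?? DEFAULTS.welcomeMessage,
    // Anything other than "inline" falls back to the popup mode.
    mode: dataset["mode"] === "inline" ? "inline" : "popup",
  };
}
```

In a browser, the argument would typically be the `dataset` of `document.currentScript`, read while the script is first executing.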

Multi-Provider Architecture

The server middleware abstracts AI providers behind a unified interface. Switching from Claude to OpenAI to Gemini requires changing a single environment variable — no code changes. Each provider adapter handles the differences in API format, streaming protocol, and token counting. The server proxies requests so API keys never touch the client.
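One way to picture the unified interface is a small provider registry keyed by an environment variable. The sketch below is an assumption-laden illustration: the `ChatProvider` interface, the `CHAT_PROVIDER` variable name, and the stub adapters are invented here, and a real adapter would call the vendor's API instead of echoing input.

```typescript
type Role = "user" | "assistant" | "system";

interface ChatMessage {
  role: Role;
  content: string;
}

// Unified interface: each adapter hides one vendor's request format
// and streaming protocol behind the same async iterator of text tokens.
interface ChatProvider {
  readonly name: string;
  streamChat(messages: ChatMessage[]): AsyncIterable<string>;
}

// Stub adapter standing in for a real API call (hypothetical).
// It echoes the last message back one word at a time.
function makeStubProvider(name: string): ChatProvider {
  return {
    name,
    async *streamChat(messages) {
      const last = messages[messages.length - 1]?.content ?? "";
      for (const word of last.split(" ")) {
        yield word;
      }
    },
  };
}

const PROVIDERS: Record<string, ChatProvider> = {
  claude: makeStubProvider("claude"),
  openai: makeStubProvider("openai"),
  gemini: makeStubProvider("gemini"),
};

// CHAT_PROVIDER is a hypothetical variable name; the env object is passed
// in explicitly so the function works on any runtime.
function selectProvider(
  env: Record<string, string | undefined>
): ChatProvider {
  const key = (env["CHAT_PROVIDER"] ?? "claude").toLowerCase();
  const provider = PROVIDERS[key];
  if (!provider) {
    throw new Error(`Unknown provider: ${key}`);
  }
  return provider;
}
```

Because the rest of the server only depends on `ChatProvider`, changing the variable swaps the backend without touching request handling.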

Real-Time Streaming

Responses stream token by token via Server-Sent Events. The widget renders markdown in real time as tokens arrive, including code blocks with syntax highlighting. Users get immediate feedback and a natural conversational experience instead of waiting for a complete response.
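On the client side, consuming an SSE stream mostly comes down to splitting incoming text into events at blank lines and collecting the `data:` fields. The following is a simplified sketch of such an incremental parser, assuming each event carries a plain-text token. `SseParser` is a hypothetical name, the actual ChatCops wire format is not described in this case study, and the sketch ignores `event:`, `id:`, and bare `\r` line endings.

```typescript
// Incremental parser for a Server-Sent Events text stream.
// Events end with a blank line; each "data:" line contributes payload.
class SseParser {
  private buffer = "";

  // Feed one network chunk; returns the data payload of every event
  // that this chunk completed. Partial events stay in the buffer.
  push(chunk: string): string[] {
    // Normalize on the whole buffer so a "\r\n" split across chunks is handled.
    this.buffer = (this.buffer + chunk).replace(/\r\n/g, "\n");
    const events: string[] = [];
    let boundary = this.buffer.indexOf("\n\n");
    while (boundary !== -1) {
      const rawEvent = this.buffer.slice(0, boundary);
      this.buffer = this.buffer.slice(boundary + 2);
      const dataLines = rawEvent
        .split("\n")
        .filter((line) => line.startsWith("data:"))
        // Drop the field name and the single optional space after the colon.
        .map((line) => line.slice(5).replace(/^ /, ""));
      if (dataLines.length > 0) {
        events.push(dataLines.join("\n"));
      }
      boundary = this.buffer.indexOf("\n\n");
    }
    return events;
  }
}
```

A widget could call `push` for every decoded chunk from a streaming response body and append the returned tokens to the message being rendered.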

Lead Capture & Knowledge Base

The built-in lead capture system collects visitor contact information before or during chat, with webhook integration to push leads to CRMs or notification systems. The knowledge base feature lets site owners provide text chunks that the AI uses as context — enabling accurate, domain-specific responses without fine-tuning.
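The knowledge base idea can be sketched as "pick the most relevant text chunks, then prepend them to the system prompt". The example below uses naive keyword overlap for relevance, which is a stand-in chosen for brevity and not necessarily how ChatCops ranks chunks. The `KnowledgeChunk` shape and both function names are hypothetical.

```typescript
interface KnowledgeChunk {
  title: string;
  text: string;
}

// Lowercased set of alphanumeric words in a string.
function tokenize(text: string): Set<string> {
  return new Set<string>(text.toLowerCase().match(/[a-z0-9]+/g) ?? []);
}

// Score = number of distinct question words that also appear in the chunk.
// Chunks with no overlap are dropped; the top `limit` are returned.
function selectChunks(
  question: string,
  chunks: KnowledgeChunk[],
  limit = 2
): KnowledgeChunk[] {
  const queryWords = tokenize(question);
  return chunks
    .map((chunk) => {
      const words = tokenize(`${chunk.title} ${chunk.text}`);
      let score = 0;
      queryWords.forEach((w) => {
        if (words.has(w)) score++;
      });
      return { chunk, score };
    })
    .filter((scored) => scored.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((scored) => scored.chunk);
}

// Appends the selected chunks to the base system prompt as context.
function buildSystemPrompt(
  basePrompt: string,
  selected: KnowledgeChunk[]
): string {
  if (selected.length === 0) return basePrompt;
  const context = selected
    .map((c) => `## ${c.title}\n${c.text}`)
    .join("\n\n");
  return `${basePrompt}\n\nUse the following context when relevant:\n\n${context}`;
}
```

Supplying context at request time like this is what lets answers stay domain-specific without fine-tuning the underlying model.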

Deploy Anywhere

Server adapters for Express, Vercel Edge Functions, and Cloudflare Workers mean ChatCops runs on whatever infrastructure you already use. Each adapter is a thin wrapper around the core middleware, handling platform-specific request/response patterns while sharing all business logic.
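The "thin wrapper" pattern can be illustrated with a platform-neutral core handler and an adapter that only translates request and response shapes. This is a sketch under stated assumptions: `handleChat`, `CoreRequest`, `CoreResponse`, and `expressAdapter` are hypothetical names, the core returns a canned reply instead of calling a provider, and the Express types are minimal structural stand-ins so the example has no dependencies.

```typescript
// Platform-neutral shapes used by the shared core.
interface CoreRequest {
  method: string;
  body: unknown;
}

interface CoreResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

// Shared business logic: validates input and builds a response.
// It knows nothing about Express, Vercel, or Cloudflare.
async function handleChat(req: CoreRequest): Promise<CoreResponse> {
  const headers = { "Content-Type": "application/json" };
  if (req.method !== "POST") {
    return { status: 405, headers, body: JSON.stringify({ error: "Method not allowed" }) };
  }
  const message = (req.body as { message?: unknown } | null)?.message;
  if (typeof message !== "string" || message.trim() === "") {
    return { status: 400, headers, body: JSON.stringify({ error: "message is required" }) };
  }
  // Placeholder reply; a real core would stream from the selected provider.
  return { status: 200, headers, body: JSON.stringify({ reply: `You said: ${message}` }) };
}

// Minimal structural stand-ins for Express's req/res objects.
interface ExpressLikeRequest {
  method: string;
  body?: unknown;
}

interface ExpressLikeResponse {
  status(code: number): ExpressLikeResponse;
  set(headers: Record<string, string>): ExpressLikeResponse;
  send(body: string): unknown;
}

// The adapter only converts shapes and delegates everything else to the core.
function expressAdapter() {
  return async (req: ExpressLikeRequest, res: ExpressLikeResponse): Promise<void> => {
    const result = await handleChat({ method: req.method, body: req.body });
    res.status(result.status).set(result.headers).send(result.body);
  };
}
```

An adapter for a fetch-style runtime would follow the same outline, reading the body from the incoming request object and building the platform's response object from `CoreResponse`.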

Tech Stack

- Widget: Vanilla JavaScript with Shadow DOM isolation (~7KB gzipped)

- Server: Node.js middleware with platform adapters (Express, Vercel, Cloudflare)

- AI Providers: Anthropic Claude, OpenAI, Google Gemini — unified interface

- Streaming: Server-Sent Events (SSE) for real-time token delivery

- Persistence: localStorage by default, pluggable server-side stores

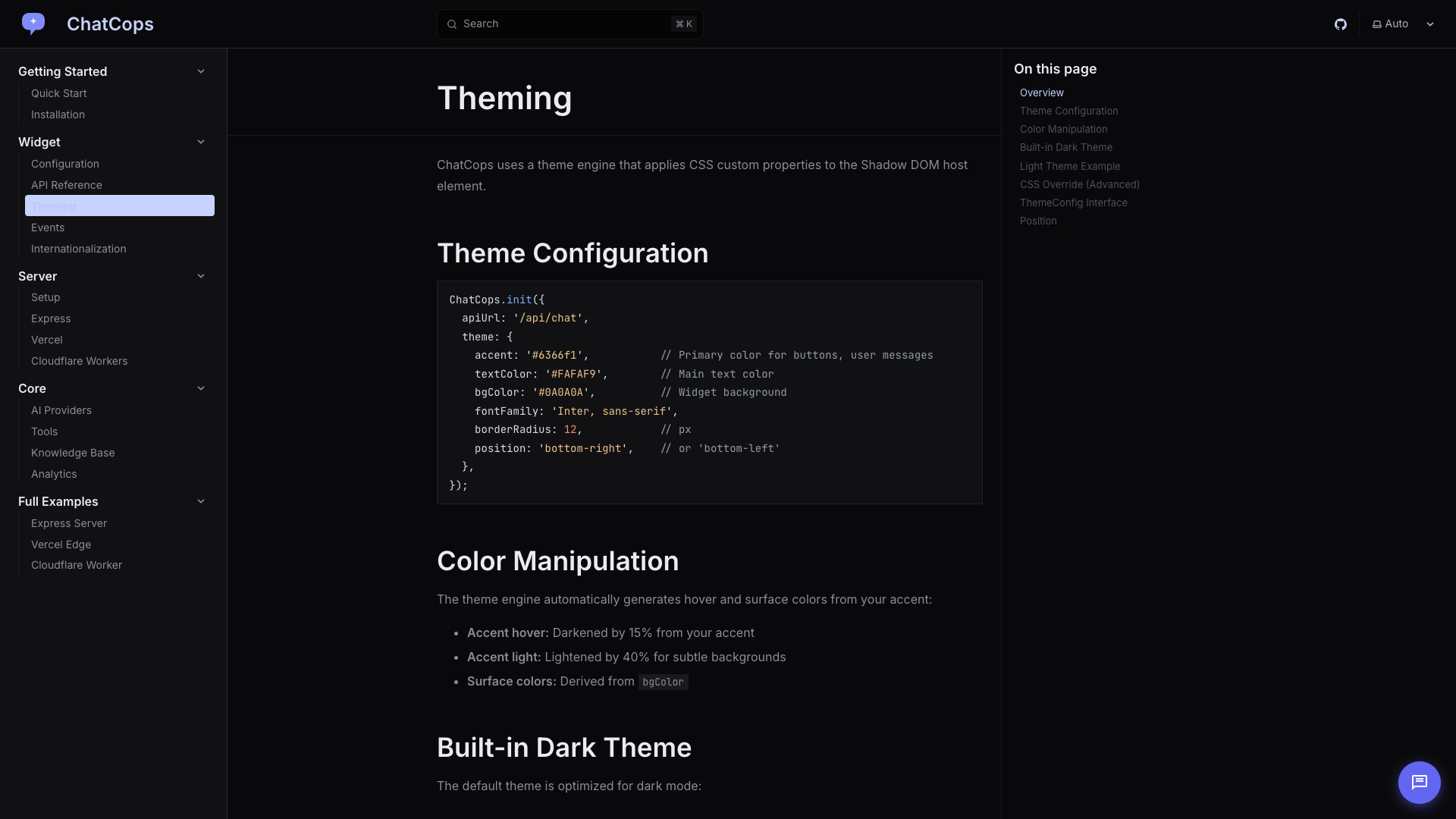

- Documentation: Custom docs site at chat.codercops.com

- License: MIT — fully open source

Results

- ~7KB gzipped widget with zero runtime dependencies

- 3 AI providers supported with a single environment variable switch

- 8 languages built-in for internationalization

- 3 deployment targets — Express, Vercel, and Cloudflare Workers

- Open-source on GitHub with MIT license

- Complete documentation covering quick start, configuration, theming, providers, and full deployment examples

Also from the bench

Web Development

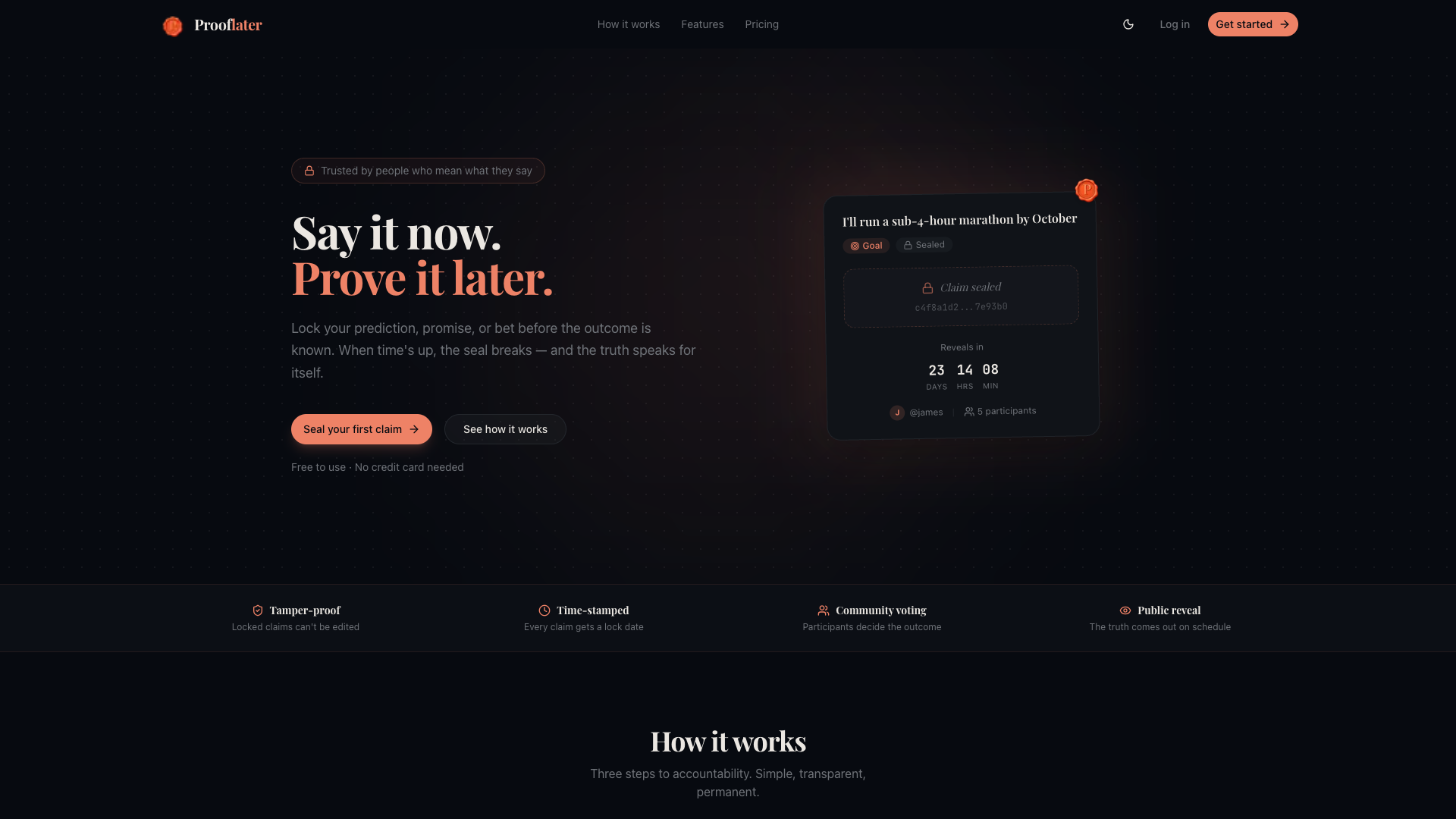

Prooflater

A tamper-proof prediction and accountability platform where users seal claims with SHA-256 cryptographic hashing, set countdowns, and reveal outcomes with community voting.

Developer Tools

OGCOPS

A free, open-source OG image generator with 109+ templates, real-time preview across 8 social platforms, and a CORS-open API — no login required.

E-commerce

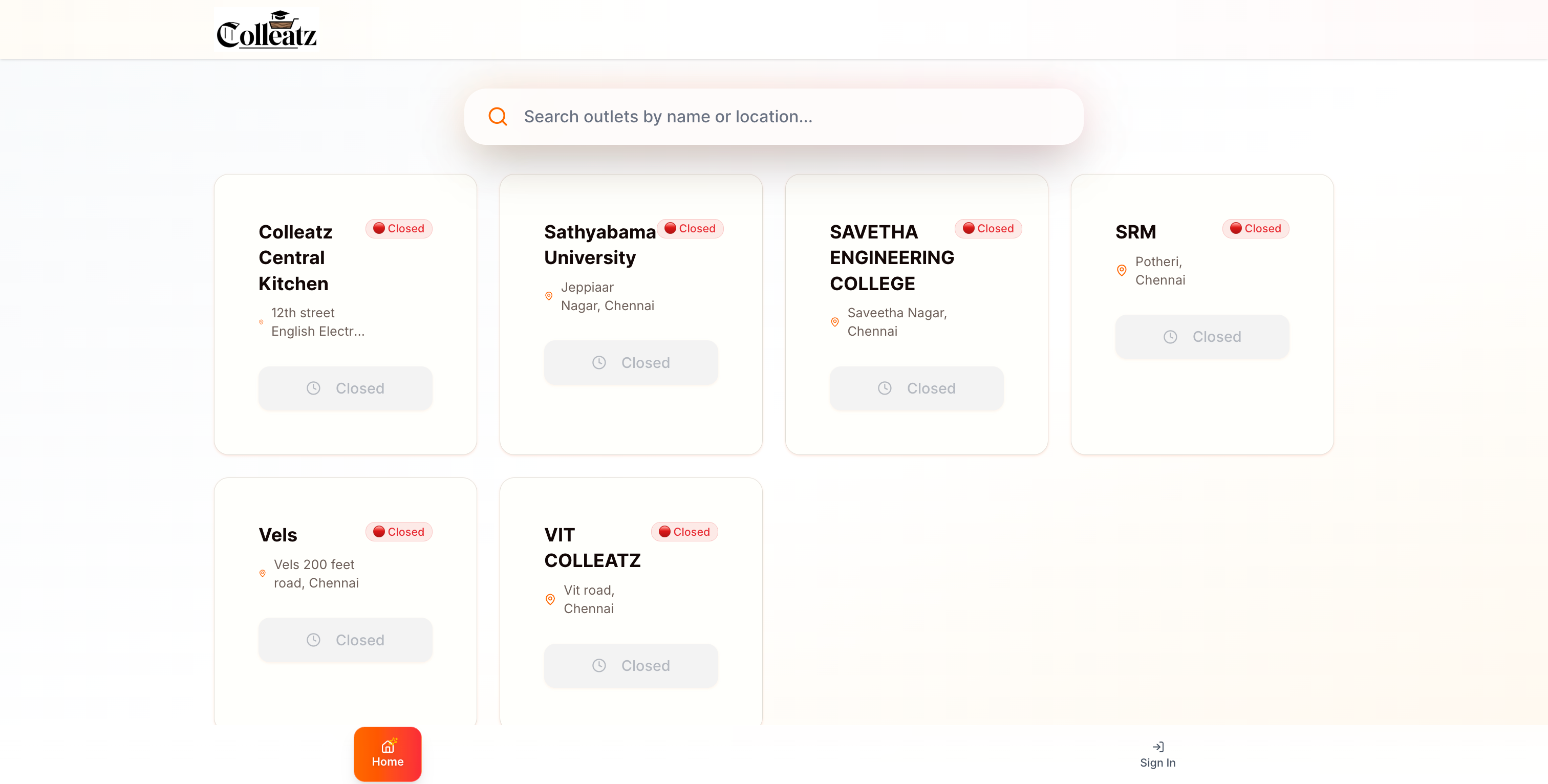

Colleatz

A modern food delivery and ordering platform enabling users to browse, order, and track food from multiple restaurant outlets with real-time updates.