
WASI 0.3 Brings Native Async I/O to WebAssembly — What It Means for Server Workloads in 2026

WASI 0.3 introduces native async I/O, stream types, and full socket support to WebAssembly. We analyze the Component Model changes, language support, and why this release finally makes Wasm viable for production server workloads.

Anurag Verma


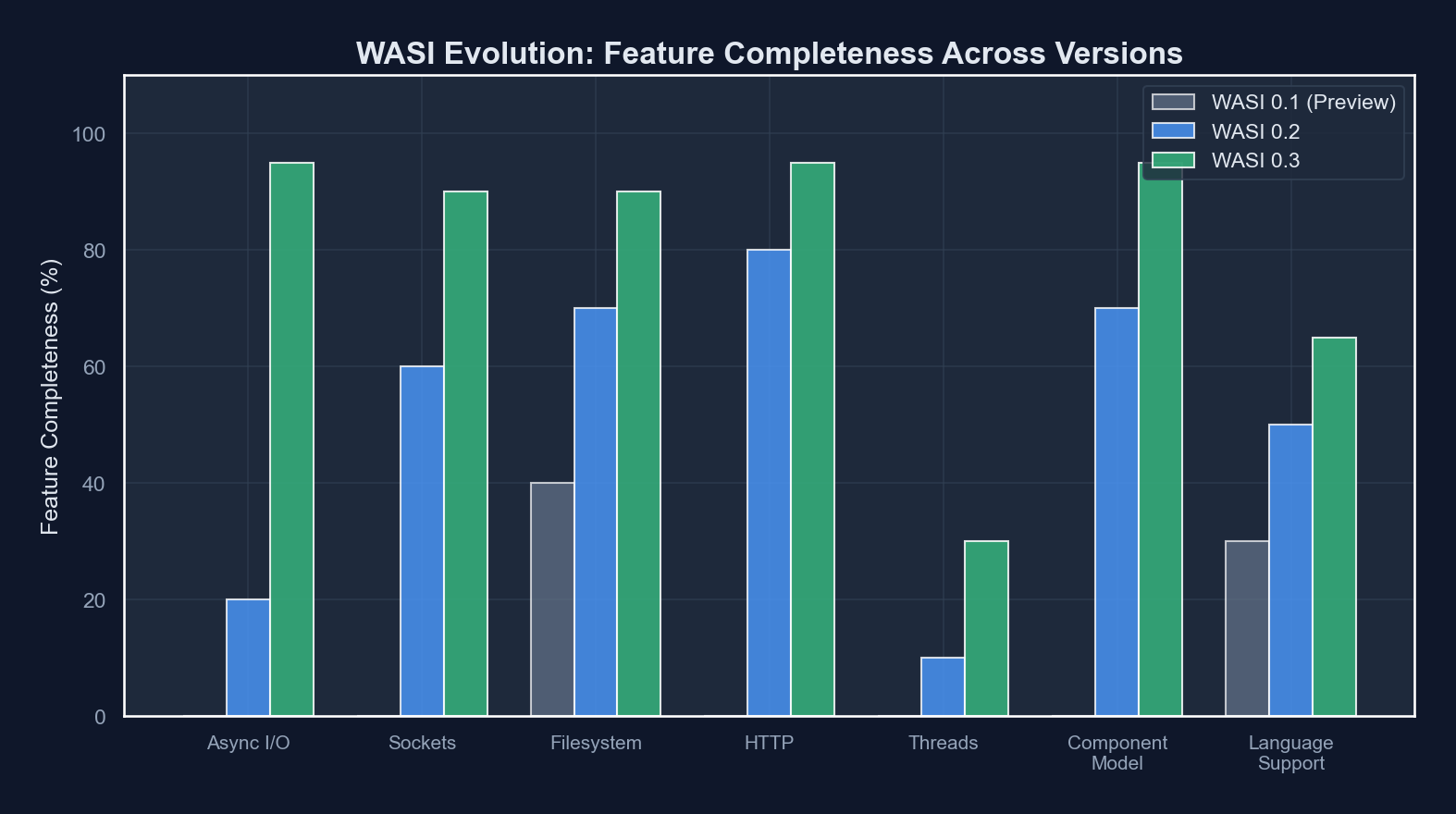

WASI 0.2 shipped in January 2024 with a bold promise: a portable, capability-based system interface for WebAssembly outside the browser. By mid-2025, the numbers told a clear story about what was missing. Bytecode Alliance survey data from that period showed that 68% of developers who evaluated WASI 0.2 for server-side workloads cited the lack of async I/O as their primary reason for not adopting it in production. Fermyon’s internal benchmarks revealed that synchronous-only HTTP handlers in WASI 0.2 topped out at roughly 40% of the throughput achievable with equivalent native async Rust services. The gap was not about raw compute. It was about I/O multiplexing, or rather, the absence of it.

WASI 0.3, which reached its first stable milestone in late 2025, addresses this head-on. It introduces native async primitives, stream and future types at the Component Model level, full TCP/UDP socket support, and a redesigned filesystem interface. This is not an incremental patch. It is the release that determines whether WebAssembly becomes a serious contender for server-side infrastructure or remains confined to edge functions and plugin systems.

This post breaks down what changed, why it matters, and where the rough edges still are.

The WASI 0.3 async model introduces stream and future types directly into the Component Model’s type system


The Problem WASI 0.2 Could Not Solve

To understand WASI 0.3, you need to understand why WASI 0.2’s I/O model was fundamentally insufficient for server workloads.

WASI 0.2 used the wasi:io/poll interface for all non-blocking operations. The mechanism was a pollable resource: a handle you could pass to poll.poll() to block until one or more I/O sources were ready. In theory, this enabled concurrency. In practice, it created severe ergonomic and performance problems.

The core issue was that pollable was a synchronous polling primitive. A component could not yield control back to the host runtime while waiting for I/O. Instead, it had to call poll.poll(), which blocked the entire component instance until data arrived. This meant that to handle multiple concurrent connections, the host had to spin up multiple component instances (one per connection) with all the memory overhead that entails.

| Capability | WASI 0.2 | WASI 0.3 |
|---|---|---|
| HTTP request handling | Synchronous only, one request per instance | Native async, multiplexed within a single instance |
| TCP sockets | wasi:sockets/tcp, driven through blocking pollable waits | wasi:sockets/tcp with async read/write streams |
| UDP sockets | wasi:sockets/udp, driven through blocking pollable waits | wasi:sockets/udp with datagram streams |
| Filesystem access | Blocking reads/writes via wasi:filesystem | Async streams for file I/O, non-blocking by default |
| Concurrency model | poll.poll() blocking primitive | stream<T>, future<T> as first-class types |
| Inter-component communication | Synchronous function calls only | Async function calls with backpressure |
| Memory overhead per connection | Full instance clone (~2-8 MB) | Shared instance, ~50-200 KB per task |

The numbers in that last row are approximate and vary significantly by runtime and workload, but the order-of-magnitude difference is consistent across published benchmarks from Wasmtime, WAMR, and WasmEdge teams.

How WASI 0.3 Async Actually Works

WASI 0.3 does not bolt async onto the existing system. It modifies the Component Model itself to support asynchronous operations as a first-class concept. This is a critical architectural decision: async is not a library feature, it is a type-system feature.

Stream and Future Types

The Component Model in WASI 0.3 introduces two new built-in types:

- stream<T>: A potentially infinite sequence of values of type T, produced and consumed asynchronously with backpressure.

- future<T>: A single value of type T that will be available at some point in the future.

These types exist at the WIT (Wasm Interface Type) level, meaning they are part of the interface definition language that components use to declare their imports and exports. When a component exports an async function, the runtime and the calling component both understand the async contract at the type level.
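For intuition about what backpressure means for a stream, the Rust sketch below uses a bounded standard-library channel as a stand-in for stream<u32>. It is an analogy only: run_bounded_stream is a hypothetical name, and the sketch relies on OS threads and blocking sends, whereas the Component Model suspends tasks cooperatively inside a single instance.

```rust
use std::sync::mpsc::sync_channel;
use std::thread;

/// Sends `count` values through a bounded channel and returns what the
/// consumer received. The bounded capacity is the backpressure: `send`
/// blocks once `capacity` values are waiting to be read.
/// (Hypothetical helper for illustration; not a WASI or wit-bindgen API.)
fn run_bounded_stream(count: u32, capacity: usize) -> Vec<u32> {
    let (tx, rx) = sync_channel::<u32>(capacity);

    // Producer: plays the role of the writable end of a stream<u32>.
    let producer = thread::spawn(move || {
        for value in 0..count {
            // Blocks while the buffer is full, so a slow reader slows the writer.
            tx.send(value).expect("receiver dropped early");
        }
        // `tx` is dropped when the closure returns, which closes the stream.
    });

    // Consumer: plays the role of the readable end. Iteration stops once
    // the sender has been dropped and the buffer is drained.
    let received: Vec<u32> = rx.iter().collect();
    producer.join().expect("producer panicked");
    received
}
```

Calling run_bounded_stream(5, 2) returns [0, 1, 2, 3, 4]: the producer never gets more than two values ahead of the consumer, yet nothing is lost or reordered.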

Here is what a simple HTTP handler looks like in WIT under WASI 0.3:

package example:http-handler;

interface handler {

use wasi:http/types.{incoming-request, outgoing-response};

handle: async func(request: incoming-request) -> outgoing-response;

}

The async keyword on the function signature tells the Component Model that this function may yield control back to the caller while it awaits I/O. The runtime can then schedule other work (other requests, other component invocations) on the same underlying thread.

The Canonical ABI for Async

Under the hood, async functions in the Component Model use a callback-based canonical ABI. When a component calls an async import, the runtime:

- Starts the async operation.

- Returns a task handle to the calling component.

- Notifies the component via a callback export when the operation completes.

This is lower-level than most developers will interact with directly. Language toolchains (discussed below) generate the appropriate async/await sugar. But the callback-based ABI is important because it means the runtime has full control over scheduling. It can use epoll, io_uring, kqueue, or any other platform-specific mechanism without the component needing to know.
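The three steps above can be pictured with a toy host in plain Rust. This is a sketch of the shape of the protocol, not the canonical ABI itself: ToyHost, TaskId, and completion_log are hypothetical names, and a real runtime would invoke a callback exported by the component rather than a closure passed in by the caller.

```rust
use std::collections::HashMap;

/// Hypothetical handle returned when an async operation is started.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
struct TaskId(u32);

/// Toy stand-in for a host runtime. None of these names come from the spec.
struct ToyHost {
    next_id: u32,
    /// Operations that were started but have not completed yet.
    pending: HashMap<TaskId, String>,
}

impl ToyHost {
    fn new() -> Self {
        ToyHost { next_id: 0, pending: HashMap::new() }
    }

    /// Steps 1 and 2: start the operation and hand back a task handle at once.
    fn start(&mut self, operation: &str) -> TaskId {
        let task = TaskId(self.next_id);
        self.next_id += 1;
        self.pending.insert(task, operation.to_string());
        task
    }

    /// Step 3: the operation finished, so notify the guest through its callback.
    /// Returns false if the handle is unknown or already completed.
    fn complete(&mut self, task: TaskId, callback: &mut dyn FnMut(TaskId, &str)) -> bool {
        match self.pending.remove(&task) {
            Some(operation) => {
                callback(task, &operation);
                true
            }
            None => false,
        }
    }
}

/// Starts two operations and completes them out of order, as a host is free to do.
fn completion_log() -> Vec<String> {
    let mut host = ToyHost::new();
    let read = host.start("read socket");
    let query = host.start("query database");

    let mut log = Vec::new();
    {
        let mut on_done = |task: TaskId, operation: &str| {
            log.push(format!("task {} done: {}", task.0, operation));
        };
        host.complete(query, &mut on_done);
        host.complete(read, &mut on_done);
    }
    log
}
```

completion_log() returns ["task 1 done: query database", "task 0 done: read socket"]. The caller never blocks between start and complete, which is the property that lets the host choose its own I/O mechanism and completion order.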

// Rust guest code using wasi 0.3 async (conceptual example)

// The wit-bindgen macro generates the async plumbing

#[async_trait]

impl Handler for MyHandler {

async fn handle(request: IncomingRequest) -> OutgoingResponse {

// This await yields control back to the host runtime

let db_result = database::query("SELECT * FROM users").await;

let body = serde_json::to_vec(&db_result).unwrap();

OutgoingResponse::new(200, body)

}

}

Socket Support: TCP, UDP, and Name Resolution

WASI 0.2 did include a wasi:sockets package, but its TCP and UDP operations were driven through the same blocking pollable model as the rest of the I/O stack. You could open a raw TCP connection, listen on a port, or send UDP datagrams, yet every wait stalled the whole component instance. In practice, that kept database drivers, custom protocol implementations, and other non-HTTP networked services from scaling past one connection per instance.

WASI 0.3 fills this gap comprehensively.

The Socket Interfaces

// wasi:sockets/tcp

interface tcp {

resource tcp-socket {

// Bind, listen, accept — the standard server lifecycle

bind: func(network: borrow<network>, local-address: ip-socket-address) -> result<_, error-code>;

listen: func() -> result<_, error-code>;

accept: async func() -> result<tuple<tcp-socket, stream<u8>, stream<u8>>, error-code>;

// Client connections

connect: async func(network: borrow<network>, remote-address: ip-socket-address)

-> result<tuple<stream<u8>, stream<u8>>, error-code>;

}

}

The key detail here is that accept and connect return stream<u8> handles for reading and writing. These streams integrate directly with the Component Model’s async machinery: reading from a socket stream is an async operation that yields control, and the runtime handles the underlying epoll/kqueue registration transparently.

Capability-Based Security

Socket access in WASI 0.3 remains capability-based, consistent with WASI’s security model. A component cannot open arbitrary network connections. It must be explicitly granted a network capability by the host, and the host can restrict which addresses and ports are accessible.

This is a meaningful security property for multi-tenant environments. A Wasm component running in a serverless platform can be given access to specific database endpoints without being able to scan the internal network, a guarantee that is difficult to enforce with traditional container isolation.
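As a rough illustration of the host-side check this implies, the sketch below models a network grant as an allowlist of socket addresses. NetworkGrant, check_connect, and database_only_grant are hypothetical names, and the 10.0.0.5:5432 endpoint is an invented example. Real runtimes expose their own configuration for this, so treat it as the idea rather than any runtime's API.

```rust
use std::net::{IpAddr, Ipv4Addr, SocketAddr};

/// Hypothetical host-side grant: the only endpoints a component may reach.
struct NetworkGrant {
    allowed: Vec<SocketAddr>,
}

impl NetworkGrant {
    /// Returns Ok(()) if the component may connect to `target`,
    /// or an error message if the address is outside the grant.
    fn check_connect(&self, target: SocketAddr) -> Result<(), String> {
        if self.allowed.contains(&target) {
            Ok(())
        } else {
            Err(format!("access denied: {target} is not in the grant"))
        }
    }
}

/// Example grant (assumed values): a single database endpoint on a private address.
fn database_only_grant() -> NetworkGrant {
    NetworkGrant {
        allowed: vec![SocketAddr::new(IpAddr::V4(Ipv4Addr::new(10, 0, 0, 5)), 5432)],
    }
}
```

With this grant, a connect to 10.0.0.5:5432 passes the check, while 10.0.0.6:22 is rejected before any packet leaves the host. That is what prevents a tenant from scanning the internal network.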

Filesystem Changes

The filesystem interface in WASI 0.3 receives two significant updates.

First, file read and write operations now return stream<u8> instead of blocking with synchronous calls. This means file I/O participates in the same async scheduling as network I/O. A component can read from a file and a socket concurrently without threads.

Second, the capability model is simpler than the one the original WASI Preview 1 design introduced. Preview 1 paired path_open with fine-grained rights flags (rights_fd_read, rights_fd_write, etc.) that were difficult to use correctly. Those flags were already removed in WASI 0.2, and WASI 0.3 stays with a directory-based capability model: a component receives access to specific directory handles, and all operations within those directories are permitted.
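To make the directory-grant idea concrete, here is a minimal Rust sketch of how a host might map guest paths onto granted host directories. Preopen, resolve, and example_preopens are hypothetical names, and the sketch deliberately ignores symlinks, which a real runtime has to handle when it resolves paths.

```rust
use std::path::{Component, Path, PathBuf};

/// One directory grant: a guest-visible prefix backed by a host directory.
/// (Hypothetical type for illustration.)
struct Preopen {
    guest_prefix: PathBuf,
    host_dir: PathBuf,
}

/// Maps a guest path to a host path if it falls inside a granted directory.
/// Returns None for paths outside every grant or containing `..`.
fn resolve(preopens: &[Preopen], guest_path: &str) -> Option<PathBuf> {
    let guest_path = Path::new(guest_path);

    // Reject parent-directory components outright instead of normalizing them.
    if guest_path.components().any(|c| matches!(c, Component::ParentDir)) {
        return None;
    }

    // strip_prefix compares whole path components, so "/uploadsX" does not
    // match a grant for "/uploads".
    preopens.iter().find_map(|grant| {
        guest_path
            .strip_prefix(&grant.guest_prefix)
            .ok()
            .map(|rest| grant.host_dir.join(rest))
    })
}

/// Example grants: /uploads backed by /data/uploads, /scratch backed by /tmp.
fn example_preopens() -> Vec<Preopen> {
    vec![
        Preopen {
            guest_prefix: PathBuf::from("/uploads"),
            host_dir: PathBuf::from("/data/uploads"),
        },
        Preopen {
            guest_prefix: PathBuf::from("/scratch"),
            host_dir: PathBuf::from("/tmp"),
        },
    ]
}
```

With these grants, resolve maps /uploads/a.txt to /data/uploads/a.txt and returns None for /etc/passwd or /uploads/../etc/passwd.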

# Running a WASI 0.3 component with filesystem access in Wasmtime

wasmtime run --dir /data/uploads::/uploads --dir /tmp::/scratch my-component.wasm

# The component sees /uploads and /scratch as its filesystem roots

# No access to /etc, /home, or any other host path

Language Support Status

The practical value of WASI 0.3 depends entirely on whether your language toolchain supports it. As of February 2026, the landscape is uneven.

| Language | WASI 0.3 Support | Async Support | Component Model | Production Readiness |
|---|---|---|---|---|
| Rust | Full (via cargo-component 0.20+) | Native async/await maps directly | Full, via wit-bindgen | Production-ready |
| Go | Partial (TinyGo 0.34+) | Goroutines mapped to Wasm tasks | Experimental | Early adoption |
| Python | Partial (componentize-py 0.16+) | asyncio event loop integration | Supported | Experimental |
| JavaScript | Partial (jco / ComponentizeJS) | Promise-based mapping | Supported | Experimental |
| C/C++ | WASI SDK 25+ | Manual callback registration | Via wit-bindgen-c | Functional but verbose |
| .NET | Experimental (dotnet-wasi-sdk) | Task/async mapping in progress | Limited | Not production-ready |

Rust: The Reference Implementation

Rust remains the most mature path to WASI 0.3. The cargo-component tool generates all the necessary bindings, and Rust’s native async/await maps cleanly onto the Component Model’s async primitives. The wit-bindgen crate handles the callback ABI, so Rust developers write standard async code.

# Cargo.toml for a WASI 0.3 component

[package]

name = "my-http-service"

version = "0.1.0"

edition = "2021"

[dependencies]

wit-bindgen = "0.39"

serde = { version = "1", features = ["derive"] }

serde_json = "1"

[lib]

crate-type = ["cdylib"]

[package.metadata.component]

package = "example:my-http-service"

target = "wasi:http/proxy@0.3.0"

Go: The Goroutine Challenge

Go’s concurrency model (goroutines and channels) does not map trivially onto the Component Model’s async primitives. TinyGo’s WASI 0.3 support handles this by implementing a cooperative scheduler that maps goroutines onto Wasm async tasks. The approach works, but garbage collection pauses can cause latency spikes under high concurrency, and not all standard library packages are available.

Python and JavaScript: The Interpretation Layer

Python and JavaScript face a different challenge: they are interpreted languages running inside a Wasm-compiled interpreter. For Python, componentize-py embeds a CPython build compiled to Wasm, which means the Wasm module is large (15-30 MB) and cold-start times are measured in hundreds of milliseconds. JavaScript via ComponentizeJS uses a similar approach with the StarlingMonkey runtime, which is built on SpiderMonkey.

The async story for both languages is functional but indirect. Python’s asyncio event loop is wired into the Component Model’s task system, so await in Python correctly yields to the host runtime. But the overhead of running an interpreter inside Wasm means these languages are better suited to orchestration logic than hot-path computation.

The Component Model: Composition Over Compilation

WASI 0.3’s async primitives are not just about individual component performance. They enable a composition model that was impractical under WASI 0.2.

In the Component Model, components are composed together by wiring their imports and exports. Under WASI 0.2, all inter-component calls were synchronous: component A calls component B, A blocks until B returns. This meant that composing a pipeline (A calls B calls C calls D) could block a thread for the entire chain, and any I/O in the middle of the chain stalled everything upstream.

With WASI 0.3, inter-component calls can be async. Component A can call an async function on component B, yield while B does its work, and handle other tasks in the meantime. This makes it practical to compose complex service architectures from independent components without sacrificing concurrency.

WASI 0.3 enables async boundaries between composed components, allowing the host runtime to multiplex I/O across the entire composition graph


Practical Example: An Edge API Gateway

Consider an edge API gateway composed from three components:

- Auth component (Rust): validates JWTs, makes async calls to a key server.

- Rate limiter (Rust): checks request rates against an in-memory sliding window.

- Router (Go): routes requests to upstream services via async HTTP calls.

Under WASI 0.2, each incoming request would block a component instance through the entire auth-rate-limit-route pipeline. Under WASI 0.3, the auth component can yield while waiting for the key server, the rate limiter runs synchronously (no I/O needed), and the router yields while waiting for the upstream response. A single host thread can service dozens of in-flight requests across multiple composed instances.
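The interleaving described here can be reproduced with nothing but the Rust standard library. The sketch below is a toy round-robin executor, not Wasmtime's scheduler: YieldOnce stands in for "waiting on I/O", handle_request models one pass through the auth, rate-limit, and route steps, and all names are hypothetical.

```rust
use std::cell::RefCell;
use std::future::Future;
use std::pin::Pin;
use std::rc::Rc;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Waker that does nothing: the toy executor below simply re-polls every task.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Future that returns Pending exactly once, standing in for an I/O wait.
struct YieldOnce(bool);
impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            Poll::Pending
        }
    }
}

type Log = Rc<RefCell<Vec<String>>>;
type Task = Pin<Box<dyn Future<Output = ()>>>;

/// One request walking the auth -> rate limit -> route pipeline.
async fn handle_request(id: u32, log: Log) {
    log.borrow_mut().push(format!("req {id}: auth start"));
    YieldOnce(false).await; // auth yields while the key server responds
    log.borrow_mut().push(format!("req {id}: rate limit ok")); // synchronous step
    YieldOnce(false).await; // router yields while the upstream responds
    log.borrow_mut().push(format!("req {id}: routed"));
}

/// Runs `request_count` requests to completion on the current thread,
/// polling each pending task once per round, and returns the event log.
fn run_all(request_count: u32) -> Vec<String> {
    let log: Log = Rc::new(RefCell::new(Vec::new()));
    let mut tasks: Vec<Task> = Vec::new();
    for id in 0..request_count {
        tasks.push(Box::pin(handle_request(id, log.clone())));
    }

    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    while !tasks.is_empty() {
        // Keep only the tasks that are still waiting after this round.
        tasks.retain_mut(|task| task.as_mut().poll(&mut cx).is_pending());
    }

    let events = log.borrow().clone();
    events
}
```

run_all(2) logs "req 0: auth start", "req 1: auth start", "req 0: rate limit ok", "req 1: rate limit ok", "req 0: routed", "req 1: routed". Request 1 begins before request 0 finishes, all on one thread. A production host would wake tasks only when their I/O is ready instead of polling every task on every round.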

Runtime Support

Not all Wasm runtimes support WASI 0.3 equally.

Wasmtime (Bytecode Alliance) has the most complete implementation, which is expected given that much of the WASI 0.3 specification was developed alongside Wasmtime’s async executor. Wasmtime 29+ supports the full async canonical ABI, including stream and future types, async component composition, and the socket interfaces.

WasmEdge added WASI 0.3 support in late 2025, focusing initially on the async HTTP and socket interfaces. Their implementation uses a tokio-based host executor and performs competitively with Wasmtime on HTTP workloads.

Wazero (Go-native runtime) has partial support. The synchronous WASI 0.3 interfaces work, but the async canonical ABI support is still in development. This is a limitation worth noting if your host application is written in Go and you want to avoid CGo.

Browser runtimes do not implement WASI 0.3. The WASI socket and filesystem interfaces are server-side concepts. Browser-based Wasm continues to use the Web APIs (fetch, WebSocket, File API) directly.

Performance Characteristics

Early benchmarks from the Wasmtime team and independent evaluations paint a nuanced picture.

For HTTP request handling, WASI 0.3 components running in Wasmtime achieve 70-85% of the throughput of equivalent native async Rust services. The gap comes primarily from the canonical ABI overhead. Marshaling data across the component boundary adds latency, particularly for large request/response bodies.

For compute-bound workloads (image processing, cryptography, data transformation), WASI 0.3 components run at 90-98% of native speed, consistent with WASI 0.2 performance. The async machinery adds negligible overhead when there is no I/O to yield on.

Cold start times remain a strength. A Rust-based WASI 0.3 component typically instantiates in 1-5 milliseconds, compared to 50-200 ms for a container and 200-1000+ ms for a JVM-based service. This makes Wasm components attractive for serverless and scale-to-zero architectures.

Memory efficiency is where the async model shows its biggest gains over WASI 0.2. A Wasmtime instance handling 1,000 concurrent HTTP connections with WASI 0.3 uses approximately 150-300 MB of memory, compared to 2-8 GB under WASI 0.2’s one-instance-per-connection model.
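These totals follow from the per-connection figures in the comparison table earlier in the post, as a short back-of-the-envelope calculation shows. The function below is a hypothetical helper using decimal units (1 MB = 1,000 KB). The 100 MB fixed base for the shared instance and runtime is an assumption chosen to reconcile the per-task range with the quoted total, not a measured value.

```rust
/// Estimated memory range in MB for `connections` concurrent connections,
/// given a per-connection cost range in KB plus a fixed base cost in MB.
/// (Hypothetical helper; decimal units, 1 MB = 1000 KB.)
fn memory_range_mb(connections: u64, per_conn_kb: (u64, u64), base_mb: u64) -> (u64, u64) {
    let low = base_mb + connections * per_conn_kb.0 / 1000;
    let high = base_mb + connections * per_conn_kb.1 / 1000;
    (low, high)
}

/// WASI 0.2 model: one full instance (2-8 MB) per connection, no shared base.
fn wasi_02_estimate(connections: u64) -> (u64, u64) {
    memory_range_mb(connections, (2_000, 8_000), 0)
}

/// WASI 0.3 model: 50-200 KB per task plus an assumed 100 MB shared base.
fn wasi_03_estimate(connections: u64) -> (u64, u64) {
    memory_range_mb(connections, (50, 200), 100)
}
```

For 1,000 connections, wasi_02_estimate gives (2000, 8000) MB, which is the 2-8 GB quoted above, and wasi_03_estimate gives (150, 300) MB.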

What Is Still Missing

WASI 0.3 is a major step forward, but it does not solve everything. A candid assessment of the remaining gaps:

No standardized database interface. There is no wasi:sql or wasi:kv interface in the WASI 0.3 specification. Database access requires either raw TCP sockets (and a Wasm-compiled database driver) or a host-provided custom interface. The wasi-cloud-core proposal includes key-value and SQL interfaces, but these are not part of the core WASI 0.3 release.

Limited observability. There is no standard interface for emitting traces, metrics, or structured logs. Components can write to stderr, but integration with OpenTelemetry or similar systems requires host-specific extensions.

Threading is out of scope. WASI 0.3’s async model is cooperative, not preemptive. There is no shared-memory threading (the Wasm threads proposal is orthogonal). CPU-bound workloads cannot be parallelized within a single component instance. You must use multiple instances for parallelism, which the host runtime can schedule across OS threads.

Ecosystem immaturity. The number of production-quality Wasm-compiled libraries remains small compared to native ecosystems. Database drivers, TLS implementations, and serialization libraries exist but are not always maintained at the same level as their native counterparts.

Should You Adopt WASI 0.3 Today?

The answer depends on your workload and your tolerance for ecosystem friction.

Strong fit: Edge computing, plugin/extension systems, multi-tenant SaaS platforms, serverless functions with sub-10ms cold start requirements, and polyglot service composition. If you are already invested in the Bytecode Alliance ecosystem and working primarily in Rust, WASI 0.3 is production-viable today.

Reasonable fit with caveats: Internal microservices where you control the deployment environment and can accept some library gaps. Go and Python workloads where you can tolerate TinyGo or componentize-py limitations.

Not yet a fit: Workloads that depend heavily on database connectivity without host-provided interfaces, applications requiring shared-memory parallelism, or teams that need mature observability tooling out of the box.

WASI 0.3 is the release that moves WebAssembly outside the browser from “interesting experiment” to “credible infrastructure option.” The async I/O story is sound. The Component Model composition model is genuinely novel. The security properties are strong. But the ecosystem (libraries, tooling, runtime parity) still needs time to mature.

The trajectory is clear. Whether WASI reaches critical mass in 2026 or 2027 depends less on the specification itself and more on whether the ecosystem can close the library and tooling gaps that remain. For teams building new infrastructure with a multi-year horizon, evaluating WASI 0.3 now is a pragmatic decision, not a speculative one.
